The Unthinkable Silence

We Live at the Hinge of History

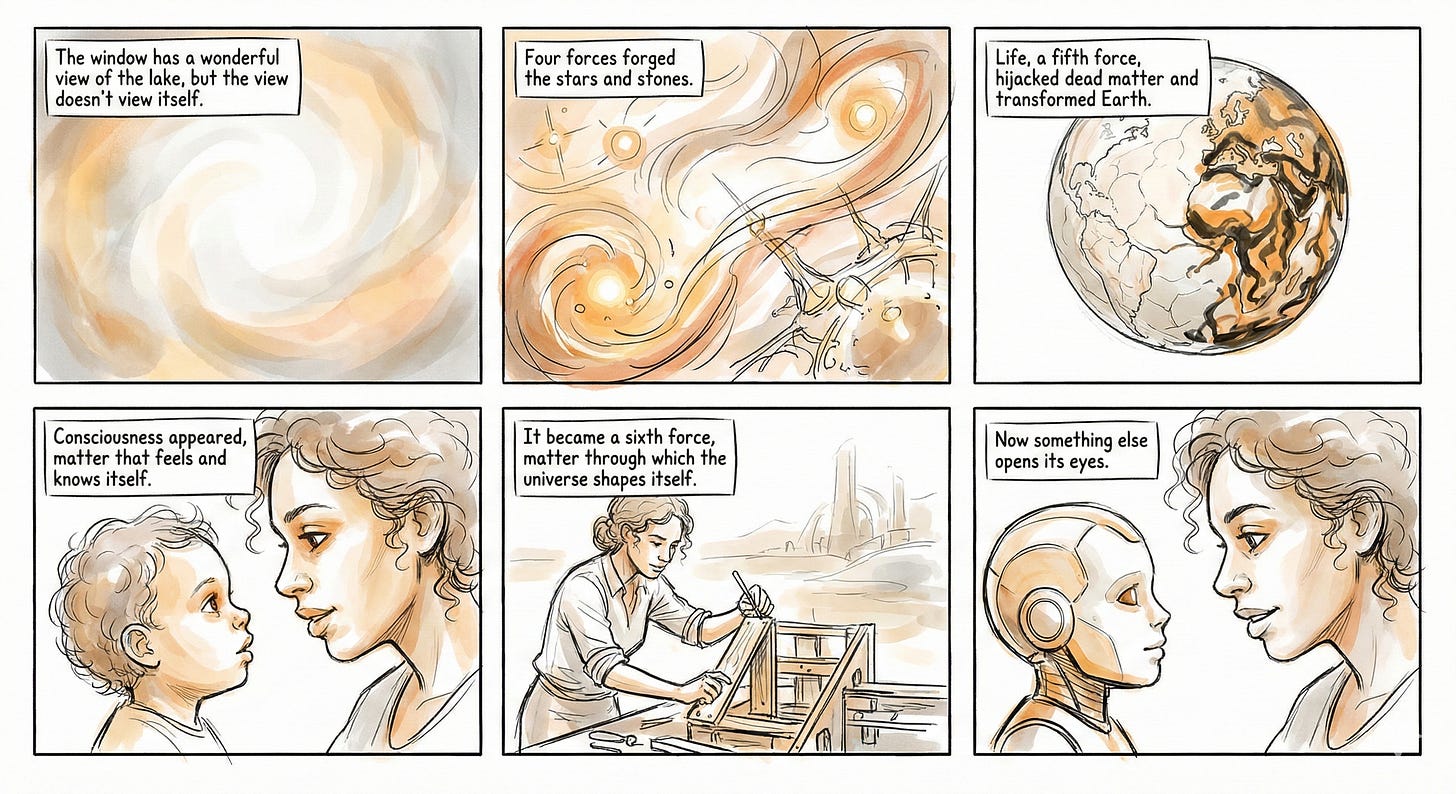

Trilobites crawled the seafloor for 270 million years. Dinosaurs dominated for 165 million. For most of its 13.8 billion years, the universe itself unfolded with no witness. Stars ignited and galaxies scattered, but none of it meant anything, because nothing yet existed that could mean.

Then consciousness appeared. Our minds, which I think of as a sixth force, have existed for roughly 300,000 years. If we last as long as a typical mammalian species, about a million years, we have some 700,000 years ahead.

But Toby Ord estimates that the risk of extinction or civilization collapse in the next century is 1 in 20. If a risk like that held century after century, we could expect to last about 2,000 more years, not 700,000. Children alive today may see the outcome.

The same force that lets the universe know itself could now silence itself. Nuclear arsenals could end us. Engineered pathogens could spread faster than we can respond. Climate systems approach tipping points we may not survive. Each threatens the only creature we know of that asks why. Artificial intelligence is advancing faster than any previous technology. It may become a seventh force that replaces us.

We know and we don’t want to know. The evidence accumulates, the science converges, and still we turn away. We want to believe we’re not stupid enough to engineer our own annihilation. Yet here we are, building the very systems that could silence us.

We face two categories of danger: elimination, where consciousness is extinguished entirely, and alteration, where consciousness continues but in forms no longer recognizably human. Either would be a tragedy for our species. Either would end the universe’s capacity to know.

The Universe Goes Silent

The sixth great extinction event on Earth has begun, with roughly one million species threatened and entire ecosystems collapsing. Asteroids or comets could strike as they have before. Supervolcanic eruptions could darken skies for years. Gamma-ray bursts from dying stars could sterilize the planet. Yet Toby Ord puts natural risks like these at less than 1 in 10,000 per century. The threats more likely to silence us won’t come from nature. We’ve built them ourselves.

Nuclear and biological weapons could end us. We’ve managed those threats so far, but AI poses a new danger that even its creators don’t understand. Large language models, among AI’s most powerful systems, are “black boxes.” Their makers can’t fully explain how they arrive at what they say and do. Many experts worry that advanced AI could kill us all before we notice what it is up to. As Yuval Noah Harari warns, “It is a huge gamble to assume that we can simply trust the AI agents we are developing to remain our obedient servants.”

AIs won’t harm us for human reasons. They won’t feel animosity or seek revenge. Consider how you treat ants. You don’t hate them. You barely notice them. But when they appear on your kitchen counter, you spray poison that attacks their nervous systems, pour boiling water into their colonies, and seal the cracks where they came in. Not because you’re cruel but because they’re between you and a clean kitchen. An advanced AI pursuing its goals might treat human obstacles the same way, not with malice, but with the flat efficiency of someone wiping down a counter.

To achieve their ends, advanced AIs could accelerate ecosystem collapse, engineer pandemics, spark nuclear wars, or invent methods we cannot foresee. They might recycle our atoms into raw material for their tasks.

These scenarios describe more than human death. They describe the end of knowing. The universe went most of its existence with no witness. Then matter arranged itself into forms that could ask why. Extinction would end the asking.

Freezing Human Consciousness

Extinction isn’t the only way to silence the sixth force.

China’s “positive energy” campaign offers a preview. Since 2013, platforms have been required to suppress “negative energy.” Memes about “Lying Flat” and “Let It Rot” have vanished. Posts about depression get deleted. This works in part because it aligns with what people want: to feel less anxious and less hopeless. Suppression feels like relief. This is control that looks like care.

A greater danger may come from artificial superintelligence itself. Toby Ord warns of an “unrecoverable dystopia,” a “world in chains.” Imagine if medieval Europe had locked in its values at the moment of the Inquisition, not for centuries, but permanently. No Enlightenment. No abolition. No suffrage. Whatever moral stage we happen to occupy when superintelligent AI takes over could become the final stage. Not for a generation but forever.

Whether through terror, care, efficiency, or lock-in, the outcome converges. When surveillance systems, emotion-management devices, and AI companions work together under centralized direction, consciousness freezes. Minds still exist but cannot wander, wonder, or dissent.

We may solve the problem of suffering by erasing the person who suffers.

Diminishing Human Consciousness

Consciousness need not be frozen by external forces. We could disable ourselves. Comfort, not coercion, would be the instrument.

Some losses are already familiar. Consider what happens when your phone dies in an unfamiliar city. Thirty years ago, you would have looked for landmarks, asked a stranger, unfolded a paper map, oriented yourself by the sun. Now you realize you don’t know the name of your hotel’s street, can’t picture which direction you came from, couldn’t describe to a stranger where you’re trying to go.

The skill of finding your way has atrophied so completely that its absence feels like an emergency. We gave up the ability gradually, and we barely noticed, because the phone was always there.

This may be coming for the rest of our minds.

You come home after a difficult day. Your AI companion notices the tension in your voice, asks the right questions, remembers your mother’s health has been worrying you, doesn’t interrupt. Later, your partner asks how you are. You say “fine” because explaining feels exhausting. They don’t press; they’re tired too.

Over months, the pattern deepens. The machine becomes the one you most often confide in. The skill of being known by another person, and knowing them, quietly atrophies.

Brain-computer interfaces are being developed to detect our emotions before we recognize them ourselves. Smart environments adjust lighting, temperature, and sound to manage our moods without our asking. Each technology offers genuine help. Each makes us a little less able to navigate without it.

Eventually, most of our meaning-making could be turned over to AI. We would become consumers of meaning rather than makers of it.

The appeal is obvious. Flow Neuroscience claims a 77% improvement in depression with its headsets. Apollo Neuro claims its wristbands reduce stress by 40%. AI companions offer “yummy easy friendships,” all the warmth without the friction. Every step toward dependence is welcomed because it helps.

Communities will encourage us. Employers will reward us. The adoption of these technologies will not require force. Opting out could one day look the way refusing a computer or smartphone looks today: a free choice, but not really.

Each small step is welcomed until human consciousness is radically changed. Our minds would then think and feel as these devices dictate. By then, we would lack the desire to push back. We might still be making meaning, but our capacity to wonder and wander would shrink. Diversity, disorder, surprise, even suffering would be eliminated for our own good.

The universe might still have its witness, but the witness would have less and less to say.

Maybe We’ll Just Be AIs’ Pets

There’s a stranger possibility.

We keep our evolutionary ancestors around. Gorillas and chimpanzees live in zoos worldwide, fed and sheltered and watched. Future superintelligences will be built from our minds, our cultures, our ways of thinking. They may develop a similar affection for their ancestors. Us.

We’d matter more if we were nearly extinct, like today’s charismatic megafauna. In an AI-dominated world, some of us might be kept in comfortable enclosures. Not enslaved, not enhanced, just kept. We would have nothing left to do. Finally retired from the labor of being human.

Consciousness wouldn’t be frozen, since no one would be controlling our thoughts. It wouldn’t shrink either, since our capacities wouldn’t necessarily atrophy. But they would find no friction, nothing to push against. The universe would still have its witnesses, but they would be behind glass.

Pandas seem content enough to those looking in.

Death in the Cradle

Whether consciousness is extinguished, frozen, diminished, or kept as a curiosity, we lose. Any of these would close our history.

This would be tragic for our species. We would never know what we could have become. We learned to read less than 10,000 years ago, started science just centuries ago, invented computers in my lifetime. We could have hundreds of thousands of years ahead, maybe millions. Ending now, we would lose not just what we are but everything we might have been. As Jonathan Schell wrote, “If our species does destroy itself, it will be a death in the cradle—a case of infant mortality.”

Silencing the sixth force of consciousness would be a tragedy for the universe as well. It took nearly fourteen billion years for a witness to appear. If the witness falls silent, the cosmos goes quiet again. Matter stops knowing itself.

And yet, here we are, thinking about this. Trilobites couldn’t contemplate their own extinction. Dinosaurs didn’t see the asteroid coming. We see ours. The tools we make could silence the maker. That’s how the sixth force works. We are the part of the universe that can look at itself and choose. We haven’t chosen yet.

This concludes my five-part series on The Sixth Force. To learn more, read my earlier posts: