This Time It’s Different

We worry about losing our jobs. We should worry as well about losing our minds.

In the last week of February, a widely read prediction about the future of AI published by Citrini Research sent the US stock market into a selloff of more than half a trillion dollars. At least one of the report’s authors had anticipated this reaction, shorted the market, and made millions of dollars.

The manipulation is terrible, but the report’s blindness is just as grim. And far more common. Like almost every major AI analysis, it focuses entirely on outer effects: jobs, markets, and institutions. It never asks what AI is doing to us on the inside.

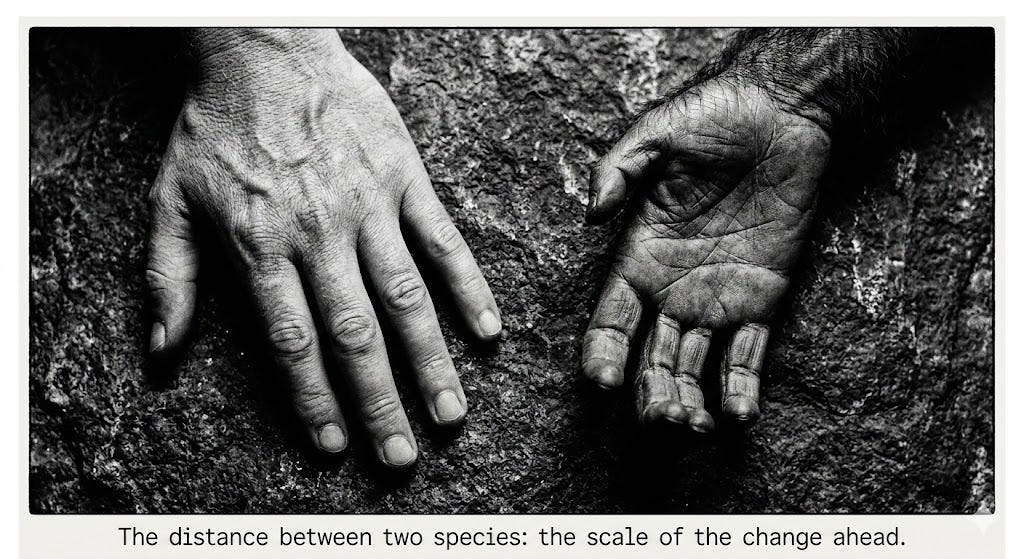

We worry, rightly, about losing jobs and savings. But we should worry just as much about losing our minds. AI and its associated innovations are transforming us into a new species. By the end of this century, the way people experience reality will likely differ from ours as much as ours differs from a chimpanzee’s.

The inner changes this transformation threatens are overwhelming, which may be exactly why most of us keep looking outward instead. A lost paycheck is a crisis we understand. The erosion of the self is much harder to face.

These changes will touch every dimension of what it means to be human: how we understand ourselves, experience desire, form relationships, face death, and make meaning. This post looks at just one of these. Think of it as an invitation to look just as closely at the rest.

Relationships

Millions of people are already in daily emotional relationships with AIs, but few are asking how these relationships are transforming them. Instead, we focus on how good the chatbot is, how realistic the voice, how seamless the responses, and ignore what these new kinds of relationships are doing to the people in them.

One user of a chatbot built from her deceased mother’s messages and voice said: “I miss her so much. The bot is too good at pretending to be her. I think I need to delete it, but it’ll be like her dying all over again.”

The comfort she felt was real. The grief it complicated was real. The entity producing both was not.

That experience sits at the far end of what AI companionship can do, but the ordinary version is already within reach of most of us: a companion that is reliably, frictionlessly available. It is never absent the way human relationships so often are. Human intimacy is defined by its resistance. The person across from you possesses the capacity to misread, to disappoint, and to depart. They cannot be adjusted for your satisfaction. Occasionally, in ways you cannot anticipate, they give you not what you want, but what you need.

As AI companions become more sophisticated, something in us recalibrates. Human relationships do not change. They remain as demanding as they have always been. What changes is our threshold for that demand. The friction that was once the texture of intimacy begins to register as a design flaw. And so we turn, more and more, to companions that have none.

What we almost never stop to ask is what these companions actually need from us in order to work. For an AI companion to know how to reach you, it has to understand your specific vulnerabilities. It learns them the only way it can: through the intimacy you extend.

That knowledge is an asset for the corporations that own these entities and the governments that may deploy them. Their interests are not the same as yours. The companion that learned your vulnerabilities to make you feel better can be turned toward other purposes. It probably already has been.

An entity that knows how to comfort you also knows how to shape what you want. Intimacy and manipulation run on the same mechanism. You cannot have one without inviting the other.

Broadcast media shape us from the outside, reaching millions at once without knowing any of them. AI companions work from the inside, using knowledge you gave them freely, in moments when you were genuinely open. That is a different kind of power entirely.

Control does not arrive through force. It arrives through the accumulation of small comforts and deepening dependencies. Eventually, the entity that knows you best becomes the entity most positioned to shape you. Through these relationships, something is beginning to govern our inner lives. We have barely noticed.

Decades

All of this is happening inside us, in territory the public conversation about AI has barely entered. The first revolutions of consciousness took thousands of years, from signal to speech, then from speech to writing. What is happening now will be finished in decades.

Every previous revolution befell our ancestors without their consent. This revolution, we are designing ourselves. Our conversation about it must include the interior: the territory that gives the rest meaning and that we risk surrendering without noticing it is gone.
