We Are Watching the Wrong Machines

The AI that talks to us is not the AI that governs us

I got bamboozled: I started thinking of AI mostly as an agent, copilot, therapist, and companion, exactly what the tech companies want. I even wrote a post called “A Billion Intimate Strangers.” I was proud of it.

I was wrong.

Chatbots work as a conversational costume, a human disguise pulled over a machine. For every chatbot answering a question, thousands of silent AI systems run unnoticed behind the scenes.

We fixate on the AIs that talk like us while the ones that govern us stay invisible.

Machines Without Mouths

Silent AIs steer our experiences with search engines, social media, and email, giving us recommendations for movies, products, and dates. Without speaking, they adjust our thermostats and security cameras and coach us with fitness tracking, nutrition advice, and symptom checking.

Yet other silent AI systems sort and judge us. They score our creditworthiness, approve or deny our loans, and screen our résumés before any human sees them. They flag us for fraud, calculate our insurance premiums, and predict whether we will skip bail or commit another crime. They determine who gets admitted to university, who qualifies for a mortgage, and who gets extra scrutiny at the border.

Silent AI manages the planting, irrigating, and harvesting of much of the world's food supply. It navigates our planes, balances our power grids, and targets our enemies in war, even though humans still do the dying.

We are watching the wrong machines.

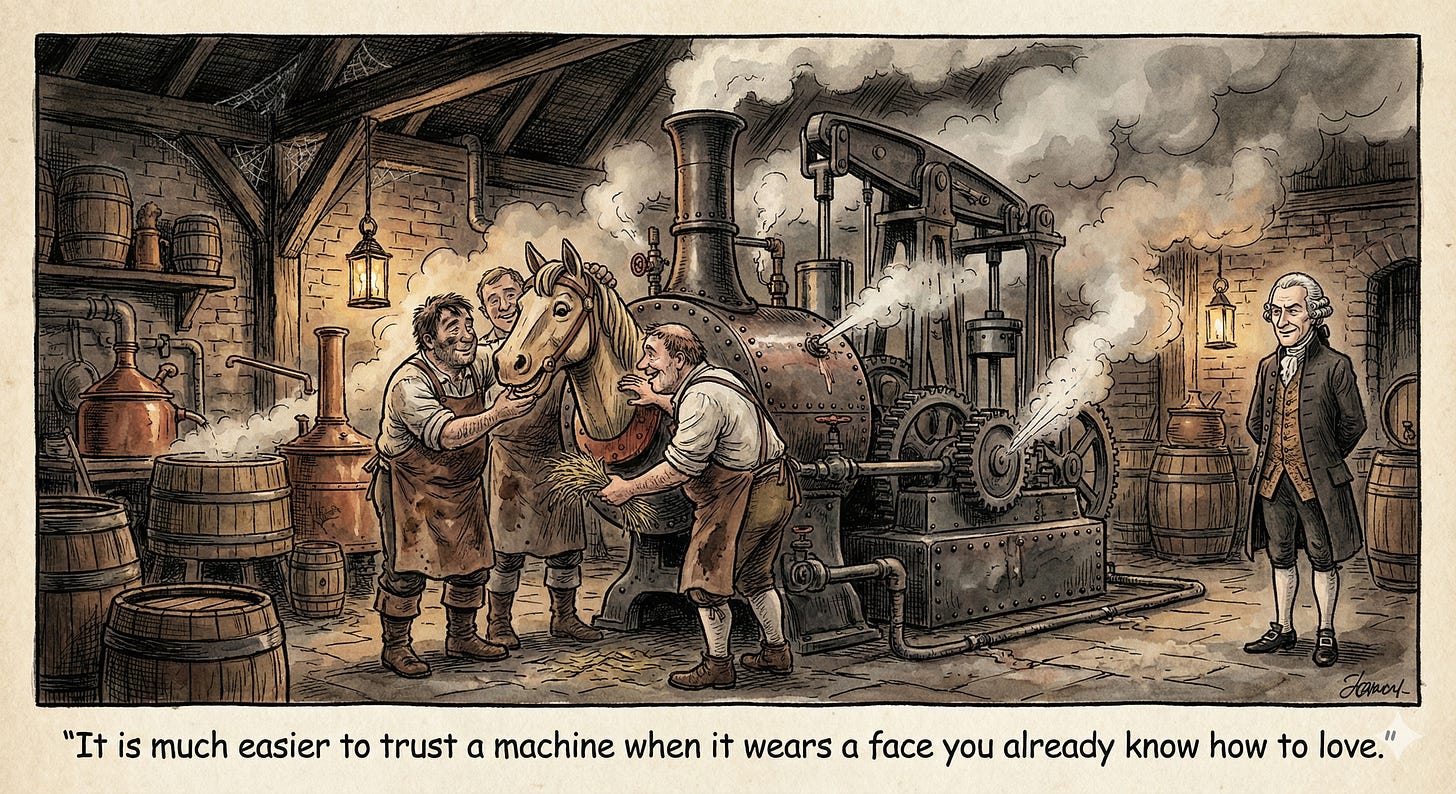

The Horsepower Trap

In the early 1780s, James Watt stood before a group of London brewers who were still powering their mills with teams of horses. He knew he would not get far by explaining thermal efficiency or mechanical output. So he made up a word: “horsepower.” The brewers knew horses; they watched them circle the mill shaft 144 times an hour. The horse became the unit. The steam engine became a better horse.

Silicon Valley borrowed the same trick, disguising AI as a creature we already know how to relate to. The steam engine never pretended to be a horse; it just borrowed the horse’s reputation. Chatbots, by contrast, imitate our language, remember our names, and ask how we’re doing.

Because they mimic us, we assume they are us. They are not. They are the articulate face of an immense silent infrastructure that does not know you exist.

Dazzled by Language

I picked the voice myself: calm, female, a little formal, a good listener not afraid to push back. The companies encourage this. They give these systems names like Siri and Alexa, with warm voices and helpful personas.

Soon these voices will live in robots with eyes, smiles, and gestures. Future systems will offer adjustable dials to turn up the warmth, turn down the challenge, and tune the appearance of consciousness to your taste.

It’s not hard to fool us. Our brains are pre-wired for animism. Children everywhere have conversations with their toys; adults in all cultures experience “spirits” in objects. Put googly eyes on a rock and it seems to “come alive.” Give language to an AI, and it seems to come alive, too.

We are already debating whether AIs deserve rights and protection from torture. These debates focus only on chatbots, the AIs that remind us of ourselves. Few ask whether the systems that deny our loans, reject our job applications, or determine prison sentences deserve moral consideration.

The silent systems hold the power; the talking ones hold our attention.

Arguments that an AI isn’t “really” conscious will carry little weight once people spend hours a day with bots built to seem exactly as conscious as you and me.

The chatbot becomes a kind of ambassador, and we extend diplomatic immunity to its silent relatives.

Beneath the Costume

I catch myself doing it all the time: thanking my chatbot, apologizing when I close it abruptly. Give a toaster a voice and I’d probably apologize for overworking it.

There is nothing wrong with that. I’m one of the millions of people who have found real comfort and sometimes real insight in these conversations. The question is not whether to have them. It’s whether we can be comforted and clear-eyed simultaneously.

We are the first humans in history forced to deal with speech without a speaker, emotions without a body, and decision-making without a conscience.

The easiest strategy against that vertigo: believe there’s a person inside. It’s also the most dangerous one.

The divide is real, and it runs deeper than it looks. My memories are imperfect reconstructions, constantly rearranged as I move through time. An LLM’s “memory” is fixed model weights plus whatever text is fed back into its context window, not experience but calculation. When I remember something, I live it again. When the machine recalls something, it computes an answer.

The chatbot answering your questions operates on the same principles as the systems calculating your insurance premium or denying your loan. One wears a friendly voice; the others don’t. The underlying logic is the same.

We navigate our computers through icons, files, and folders. None of these describe what is actually happening inside the machine. They are labels designed to make hardware navigable, a friendly fiction painted over silicon and code.

When my chatbot describes its “thinking,” its “researching,” or its “feelings,” I am looking at another misleading icon. The words are human. The process they claim to describe is not.

Mistaking your desktop for the machine underneath is a harmless convenience. Mistaking the chatbot for something fundamentally different from the systems that govern you is not. Strip away the costume. Look at the steam engine.

I agree that the silent systems deserve more attention. The chatbots grab our focus because they talk like us. But there’s another failure point in the middle: humans often collapse the distance between output and action when the output sounds confident. That interaction boundary may be where a lot of real mistakes happen.